If you’ve opened up Google Analytics recently and thought, “this doesn’t look right” — you’re not alone.

Over the past few months, we’ve been seeing a strange (and pretty consistent) pattern across a wide range of client accounts. What started as a small anomaly in October quickly turned into something much more widespread.

Let’s break down what’s actually happening, what we found, and what you should do about it.

The issue: what we’re seeing

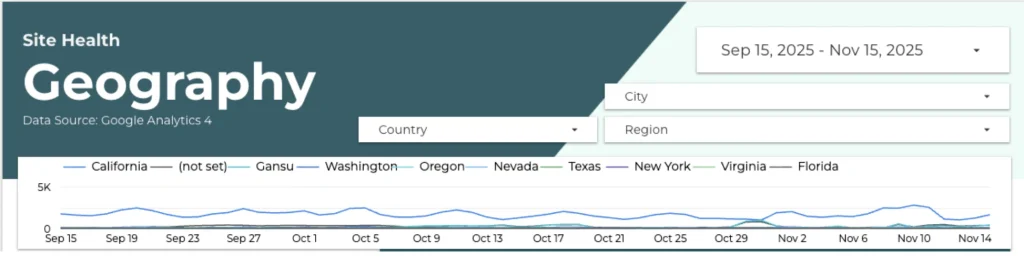

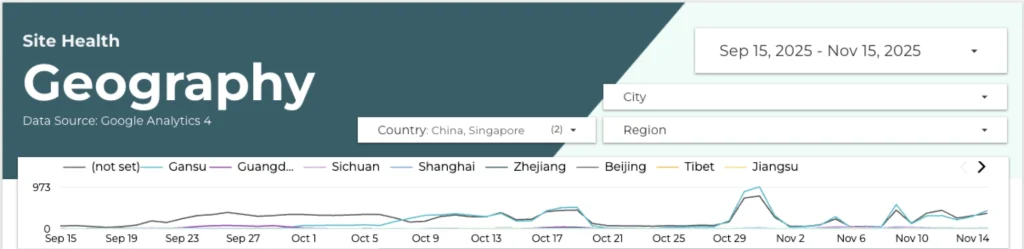

Around late September / early October, we started noticing unusual spikes in direct traffic across multiple client websites.

At first, it looked isolated. But after reviewing accounts more broadly, a pattern emerged:

- Traffic was coming from China, Singapore, and “(not set)” locations

- It appeared across the vast majority of clients

- It was hitting almost every page on the site

- Engagement was extremely low:

  - Near-zero time on page

  - No meaningful interaction

In short: lots of traffic, very little value.

Figure: Sessions over time by region (unfiltered vs. filtered)

What stood out immediately

When we dug deeper, a few things became very clear.

1. Geography patterns

A large portion of this traffic was coming from specific locations like:

- Lanzhou, Gansu (China)

- Shanghai, Shanghai (China)

- Singapore

- “(not set)”

These aren’t random — many of these locations are known to host data centers.

2. Device and technical fingerprints

The traffic shared very consistent technical characteristics:

- Device: Desktop

- Browser: Chrome

- Screen resolution: 1280 × 1200

- Operating system: Often Windows 7

That level of uniformity is unusual for real users.
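To make that fingerprint concrete, here is a minimal Python sketch of a matching rule. It assumes sessions have been exported as dictionaries. The field names (`device_category`, `browser`, `screen_resolution`, `operating_system`) are hypothetical and will depend on how you export your data. The operating system is left out of the strict match because it was only "often" Windows 7.

```python
# Hypothetical field names; adjust them to match your own analytics export.
BOT_FINGERPRINT = {
    "device_category": "desktop",
    "browser": "Chrome",
    "screen_resolution": "1280x1200",
}


def matches_fingerprint(session: dict) -> bool:
    """Return True when every fingerprint field matches the session."""
    return all(
        str(session.get(field, "")).lower() == expected.lower()
        for field, expected in BOT_FINGERPRINT.items()
    )


# Made-up sample rows for illustration only.
sessions = [
    {"device_category": "desktop", "browser": "Chrome",
     "screen_resolution": "1280x1200", "operating_system": "Windows 7"},
    {"device_category": "mobile", "browser": "Safari",
     "screen_resolution": "390x844", "operating_system": "iOS"},
]

suspects = [s for s in sessions if matches_fingerprint(s)]
print(f"{len(suspects)} of {len(sessions)} sessions match the fingerprint")
```

A match on its own is only a hint. Plenty of real people use Chrome on desktop, so this works best combined with the behaviour signals.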

3. Behaviour that doesn’t look human

This is where it becomes obvious:

- Users visiting 30+ pages per hour

- Crawling large portions of the site

- Average time on page ≈ 0 seconds

That’s not browsing — that’s automation.
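Those behaviour signals can be checked per user. The sketch below is one possible approach, not our exact method. It assumes each page view is a `(timestamp, engagement_seconds)` pair, which is a hypothetical shape for exported data. The 30-page threshold comes from the pattern above, and the 1-second engagement cut-off is an illustrative stand-in for "≈ 0 seconds".

```python
from datetime import datetime, timedelta


def max_views_in_one_hour(timestamps):
    """Largest number of page views that fall inside any one-hour window."""
    ordered = sorted(timestamps)
    best, start = 0, 0
    for end, ts in enumerate(ordered):
        # Shrink the window from the left until it spans at most one hour.
        while ts - ordered[start] > timedelta(hours=1):
            start += 1
        best = max(best, end - start + 1)
    return best


def looks_automated(page_views, min_pages_per_hour=30, max_avg_engagement=1.0):
    """Flag a user only when both volume and engagement look non-human."""
    if not page_views:
        return False
    timestamps = [ts for ts, _ in page_views]
    avg_engagement = sum(sec for _, sec in page_views) / len(page_views)
    return (max_views_in_one_hour(timestamps) >= min_pages_per_hour
            and avg_engagement <= max_avg_engagement)


# Made-up examples: a crawler hitting 40 pages in 20 minutes, and a reader.
t0 = datetime(2025, 10, 1, 9, 0)
crawler = [(t0 + timedelta(seconds=30 * i), 0.0) for i in range(40)]
reader = [(t0 + timedelta(minutes=2 * i), 45.0) for i in range(5)]

print(looks_automated(crawler), looks_automated(reader))
```

Requiring both conditions keeps the rule conservative: a keen human who reads 30 pages with real engagement is not flagged.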

4. Scale relative to site size

The spikes weren’t identical across all clients, but they scaled proportionally:

- Bigger sites → bigger spikes

- Smaller sites → smaller spikes

Which suggests this isn’t random noise — it’s systematic.

What’s actually happening?

All signs point to bot / crawler traffic.

More specifically:

- Automated systems crawling websites at scale

- Likely tied to data scraping, indexing, or AI-related processes

- Increasingly sophisticated, making them harder to filter out by default

This isn’t new — but it is becoming more common and more convincing.

What we’re doing about it

We’re not ignoring it — and we’re definitely not letting it skew reporting.

Here’s how we’re handling it internally:

- Identifying the most reliable signals to isolate this traffic

- Building filters to remove it from reporting

- Rolling out clean vs. unfiltered comparisons in Q4 reports

- Ensuring future reporting reflects real user behaviour only

Because ultimately, inflated traffic numbers mean nothing if they’re not real people.

What you should do

If you’re seeing similar patterns in your own analytics, here are a few practical steps to clean things up.

1. Filter by geography (when appropriate)

If your business doesn’t operate globally:

- Consider filtering out traffic from regions that are irrelevant

- Especially if spikes are coming from known data center locations

2. Exclude suspicious device signals

Certain environments are more likely to be bots:

- Linux-based systems (commonly used in servers and crawlers)

- Outdated operating systems (e.g. Windows 7 at scale)

That said, be careful — you don’t want to remove legitimate users unintentionally.
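One way to stay careful is to treat geography and device as signals that add up, rather than as automatic exclusions. The Python sketch below illustrates the idea. The served countries, the field names (`country`, `operating_system`), and the rule of needing two signals are all assumptions for illustration.

```python
# Hypothetical values: replace with the markets you actually serve.
SERVED_COUNTRIES = {"United Kingdom", "Ireland"}
# Operating systems named above as more bot-prone; real users run these too.
BOT_PRONE_OS = {"Linux", "Windows 7"}


def suspicion_reasons(session: dict) -> list[str]:
    """List the reasons a session looks suspicious (empty list if none)."""
    reasons = []
    if session.get("country") not in SERVED_COUNTRIES:
        reasons.append("outside served markets")
    if session.get("operating_system") in BOT_PRONE_OS:
        reasons.append("bot-prone operating system")
    return reasons


def should_exclude(session: dict) -> bool:
    # Require both signals so a lone Linux user in a served market is kept.
    return len(suspicion_reasons(session)) >= 2


# Made-up sample rows for illustration only.
sessions = [
    {"country": "China", "operating_system": "Windows 7"},
    {"country": "United Kingdom", "operating_system": "Linux"},
    {"country": "United Kingdom", "operating_system": "macOS"},
]

for s in sessions:
    print(s["country"], s["operating_system"], should_exclude(s))
```

Keeping the reasons as a list also makes it easy to review what was excluded and why before you commit to a filter.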

3. Build smarter audiences

Instead of only filtering data out, define what good traffic looks like.

For example:

- Exclude users who visit 30+ pages in a day (if unrealistic for your site)

- Include only users with session duration > 15 seconds

Just keep in mind: overly aggressive filters can also remove real users who bounce quickly.
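As a rough illustration, the two example rules can be written as a single "good traffic" check and compared against the unfiltered numbers. This is a sketch under assumptions: the `UserDay` record and its fields are hypothetical, and the thresholds are the example values above rather than recommendations.

```python
from dataclasses import dataclass


@dataclass
class UserDay:
    """One user's activity for one day (hypothetical export shape)."""
    user_id: str
    pages_viewed: int
    longest_session_seconds: float


def is_good_traffic(u: UserDay, max_pages_per_day: int = 30,
                    min_session_seconds: float = 15.0) -> bool:
    """Keep users under the page cap whose longest session beats the minimum."""
    return (u.pages_viewed < max_pages_per_day
            and u.longest_session_seconds > min_session_seconds)


# Made-up sample rows for illustration only.
users = [
    UserDay("a", pages_viewed=4, longest_session_seconds=95.0),
    UserDay("b", pages_viewed=120, longest_session_seconds=0.0),  # crawler-like
    UserDay("c", pages_viewed=1, longest_session_seconds=6.0),    # quick bounce
]

clean = [u for u in users if is_good_traffic(u)]
print(f"unfiltered: {len(users)} users, clean: {len(clean)} users")
```

Note that user "c" is dropped too. That is the trade-off in practice: a real visitor who bounces in six seconds looks the same as a bot under this rule.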

4. Monitor spikes (and question them)

This is probably the most important one:

If something looks off, it probably is.

With the rise of AI-driven crawling and automation, data integrity is no longer guaranteed.

Regularly sense-check:

- Traffic spikes

- Sudden changes in engagement

- Unusual geographic trends
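A simple way to automate part of that sense-check is to compare each day against a recent baseline. The sketch below flags days where sessions exceed a multiple of the median of the previous week. The 7-day window and 2x threshold are illustrative assumptions, and the daily numbers are made up.

```python
from statistics import median


def find_spikes(daily_sessions, window=7, threshold=2.0):
    """Return indexes of days above `threshold` x the prior-window median."""
    spikes = []
    for i in range(window, len(daily_sessions)):
        baseline = median(daily_sessions[i - window:i])
        if baseline > 0 and daily_sessions[i] > threshold * baseline:
            spikes.append(i)
    return spikes


# Made-up daily session counts with one obvious jump.
daily = [100, 110, 95, 105, 98, 102, 107, 104, 320, 99]

for day in find_spikes(daily):
    print(f"Day {day}: {daily[day]} sessions looks like a spike")
```

The median is used instead of the mean so that one spike does not inflate the baseline for the days that follow it. A flagged day is a prompt to investigate, not proof of bot traffic.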

5. Consider infrastructure-level solutions

If this starts affecting performance or costs:

- Tools like Cloudflare Bot Manager can help mitigate traffic at the source

A quick reality check

Even as humans, we’re not always great at proving we’re human.

If you want a reminder, try this: https://www.worldshardestcaptcha.com/

(There’s a decent chance you’ll fail it.)

Our final thoughts

This isn’t just a one-off issue — it’s part of a bigger shift.

As automation becomes more advanced, analytics platforms are increasingly mixing real users with non-human activity. That means:

- Cleaner data requires more effort

- Blind trust in metrics is risky

- And context matters more than ever

So if your traffic suddenly looks amazing overnight…

take a closer look.